As part of today’s International Supercomputing 2021 (ISC) announcements, Intel is showcasing that it will be launching a version of its upcoming Sapphire Rapids (SPR) Xeon Scalable processor with high-bandwidth memory (HBM). This version, SPR-HBM, will come later in 2022, after the main launch of Sapphire Rapids, and Intel has stated that it will be part of its general availability offering to all, rather than a vendor-specific implementation.

Hitting a Memory Bandwidth Limit

As core counts have increased in the server processor space, the designers of these processors have to ensure that there is enough data for the cores to enable peak performance. This means developing large, fast caches per core so enough data is close by at high speed, providing high-bandwidth interconnects inside the processor to shuttle data around, and ensuring there is enough main memory bandwidth from data stores located off the processor.

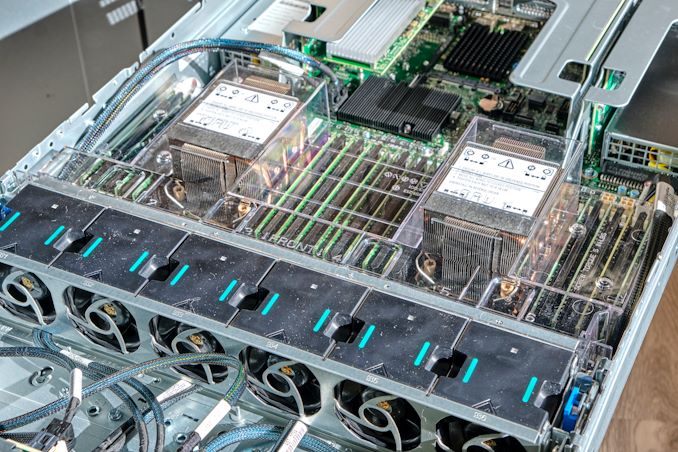

Our Ice Lake Xeon Review system with 32 DDR4-3200 Slots

Here at AnandTech, we have been asking processor vendors about this last point, about main memory, for a while. There is only so much bandwidth that can be achieved by continually adding DDR4 (and soon to be DDR5) memory channels. Current eight-channel DDR4-3200 memory designs, for example, have a theoretical maximum of 204.8 gigabytes per second, which pales in comparison to GPUs which quote 1000 gigabytes per second or more. GPUs are able to achieve higher bandwidths because they use GDDR, soldered onto the board, which allows for tighter tolerances at the expense of a modular design. Very few main processors for servers have ever had main memory integrated at such a level.
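The 204.8 gigabytes per second figure follows from simple arithmetic: channels, multiplied by transfers per second, multiplied by bytes moved per transfer. A minimal sketch, assuming the standard 64-bit (8-byte) data bus per DDR4 channel and decimal gigabytes; the function name `peak_bandwidth_gbs` is ours, purely for illustration:

```python
def peak_bandwidth_gbs(channels: int, megatransfers_per_s: int, bus_bytes: int = 8) -> float:
    """Theoretical peak memory bandwidth in decimal GB/s.

    bus_bytes=8 assumes a 64-bit data bus per channel, as on DDR4.
    """
    megabytes_per_s = channels * megatransfers_per_s * bus_bytes
    return megabytes_per_s / 1000


# Eight channels of DDR4-3200 (3200 megatransfers per second per channel).
ddr4_eight_channel = peak_bandwidth_gbs(channels=8, megatransfers_per_s=3200)
print(ddr4_eight_channel)  # 204.8
```

This is a theoretical ceiling only; sustained bandwidth in real workloads will be lower.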

Intel Xeon Phi 'KNL' with 8 MCDRAM Pads in 2015

One of the processors that used to be built with integrated memory was Intel’s Xeon Phi, a product discontinued a couple of years ago. The basis of the Xeon Phi design was lots of vector compute, controlled by up to 72 basic cores, but paired with 8-16 GB of on-board ‘MCDRAM’, connected via 4-8 on-board chiplets in the package. This allowed for 400 gigabytes per second of cache or addressable memory, paired with 384 GB of main memory at 102 gigabytes per second. However, since Xeon Phi was discontinued, no main server processor (at least for x86) announced to the public has had this sort of configuration.

New Sapphire Rapids with High-Bandwidth Memory

Until next year, that is. Intel’s new Sapphire Rapids Xeon Scalable with High-Bandwidth Memory (SPR-HBM) will be coming to market. Rather than hide it away for use with one particular hyperscaler, Intel has stated to AnandTech that they are committed to making HBM-enabled Sapphire Rapids available to all enterprise customers and server vendors as well. These versions will come out after the main Sapphire Rapids launch, and entertain some interesting configurations. We understand that this means SPR-HBM will be available in a socketed configuration.

Intel states that SPR-HBM can be used with standard DDR5, offering an additional tier in memory caching. We understand that the HBM can be addressed directly or left as an automatic cache, which would be very similar to how Intel's Xeon Phi processors could access their high-bandwidth memory.

Alternatively, SPR-HBM can work without any DDR5 at all. This reduces the physical footprint of the processor, allowing for a denser design in compute-dense servers that do not rely much on memory capacity (these customers were already asking for quad-channel design optimizations anyway).

The amount of memory was not disclosed, nor the bandwidth or the technology. At the very least, we expect the equivalent of up to 8-Hi stacks of HBM2e, up to 16 GB each, with 1-4 stacks onboard leading to up to 64 GB of HBM. At a theoretical top speed of 460 GB/s per stack, this would mean 1840 GB/s of bandwidth with four stacks, although we can imagine something more akin to 1 TB/s for yield and power, which would still give a sizeable uplift. Depending on demand, Intel may fill out different versions of the memory into different processor options.
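To make the stack arithmetic explicit, here is a rough sketch of how capacity and peak bandwidth would scale with stack count. The per-stack numbers are our expectations from the paragraph above (16 GB and 460 GB/s per HBM2e stack), not figures disclosed by Intel, and the DDR4 comparison point is the eight-channel DDR4-3200 maximum quoted earlier in this article:

```python
# Expected per-stack figures for 8-Hi HBM2e (our estimate, not disclosed by Intel).
STACK_CAPACITY_GB = 16
STACK_BANDWIDTH_GBS = 460

# Theoretical peak of an eight-channel DDR4-3200 platform, for comparison.
DDR4_EIGHT_CHANNEL_GBS = 204.8


def hbm_totals(stacks: int) -> tuple[int, int]:
    """Return (capacity in GB, theoretical peak bandwidth in GB/s) for a stack count."""
    return stacks * STACK_CAPACITY_GB, stacks * STACK_BANDWIDTH_GBS


for stacks in range(1, 5):
    capacity_gb, bandwidth_gbs = hbm_totals(stacks)
    uplift = bandwidth_gbs / DDR4_EIGHT_CHANNEL_GBS
    print(f"{stacks} stack(s): {capacity_gb} GB, {bandwidth_gbs} GB/s, "
          f"{uplift:.1f}x eight-channel DDR4-3200")
```

Even at a derated 1 TB/s, the uplift over eight-channel DDR4-3200 would be close to 5x on paper.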

One of the key elements to consider here is that on-package memory will have an associated power cost within the package. So for every watt that the HBM requires inside the package, that is one less watt for computational performance on the CPU cores. That being said, server processors often do not push the boundaries on peak frequencies, instead opting for a more efficient power/frequency point and scaling the cores. However, HBM in this regard is a tradeoff - if HBM were to take 10-20 W per stack, four stacks would easily eat into the power budget for the processor (and that power budget has to be managed with additional controllers and power delivery, adding complexity and cost).
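As a back-of-the-envelope illustration of that tradeoff, the sketch below works out how much of a package power budget would be left for the cores. The 10-20 W per stack range is our speculation from the paragraph above, and the 300 W package budget is a purely hypothetical placeholder, not an Intel specification:

```python
def hbm_power_range_w(stacks: int, per_stack_w: tuple[int, int] = (10, 20)) -> tuple[int, int]:
    """Return (low, high) total HBM power in watts for a given stack count."""
    low, high = per_stack_w
    return stacks * low, stacks * high


# Hypothetical package power budget, chosen only for illustration.
HYPOTHETICAL_PACKAGE_BUDGET_W = 300

hbm_low_w, hbm_high_w = hbm_power_range_w(stacks=4)
print(hbm_low_w, hbm_high_w)  # 40 80

# Every watt spent on HBM is a watt not available to the CPU cores.
cores_best_case_w = HYPOTHETICAL_PACKAGE_BUDGET_W - hbm_low_w
cores_worst_case_w = HYPOTHETICAL_PACKAGE_BUDGET_W - hbm_high_w
print(cores_best_case_w, cores_worst_case_w)  # 260 220
```

Under those assumptions, four stacks would claim somewhere between roughly an eighth and a quarter of the package budget.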

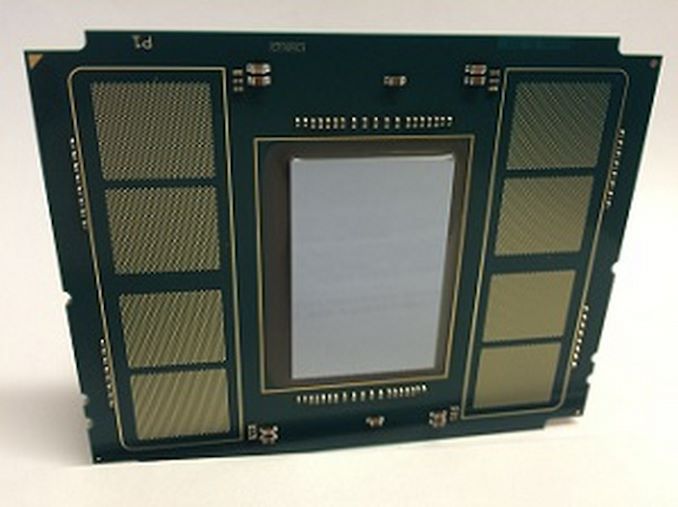

One thing that was confusing about Intel’s presentation, and I asked about this but my question was ignored during the virtual briefing, is that Intel keeps putting out different package images of Sapphire Rapids. In the briefing deck for this announcement, there were already two variants. The one above (which actually looks like an elongated Xe-HP package that someone put a logo on) and this one (which is more square and has different notches):

There have been some unconfirmed leaks online showcasing SPR in a third different package, making it all confusing.

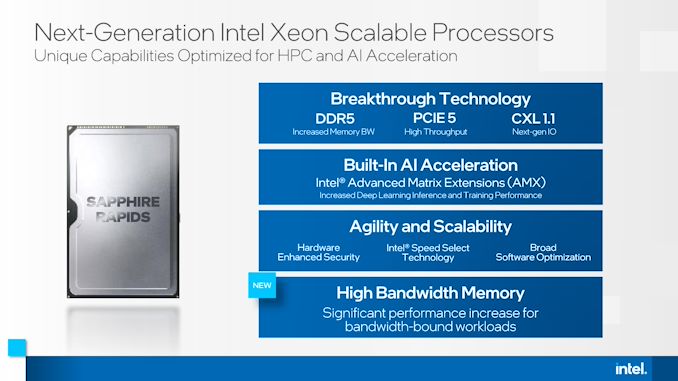

Sapphire Rapids: What We Know

Intel has been teasing Sapphire Rapids for almost two years as the successor to its Ice Lake Xeon Scalable family of processors. Built on 10nm Enhanced SuperFin, SPR will be Intel’s first processors to use DDR5 memory, have PCIe 5 connectivity, and support CXL 1.1 for next-generation connections. Also on memory, Intel has stated that Sapphire Rapids will support Crow Pass, the next generation of Intel Optane memory.

For core technology, Intel (re)confirmed that Sapphire Rapids will be using Golden Cove cores as part of its design. Golden Cove will be central to Intel's Alder Lake consumer processor later this year; however, Intel was quick to point out that Sapphire Rapids will offer a ‘server-optimized’ configuration of the core. Intel has done this in the past with both its Skylake Xeon and Ice Lake Xeon processors, wherein the server variant often has a different L2/L3 cache structure than the consumer processors, as well as a different interconnect (ring vs mesh, mesh on servers).

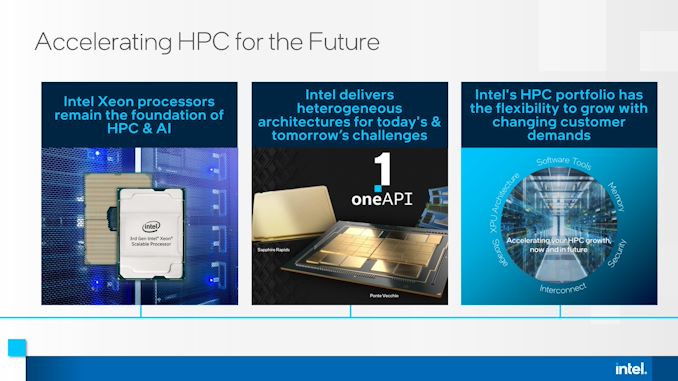

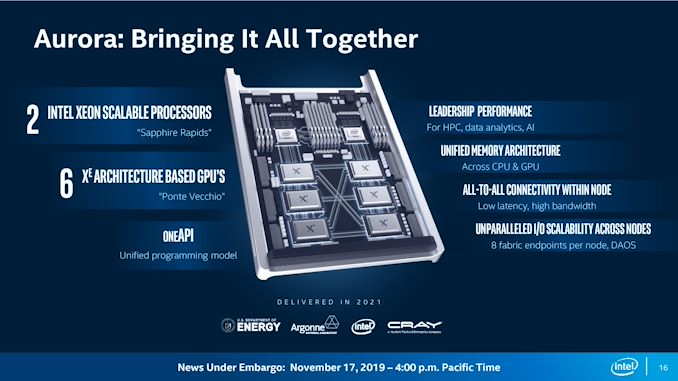

Sapphire Rapids will be the core processor at the heart of the Aurora supercomputer at Argonne National Labs, where two SPR processors will be paired with six Intel Ponte Vecchio accelerators, which will also be new to the market. Today's announcement confirms that Aurora will be using the SPR-HBM version of Sapphire Rapids.

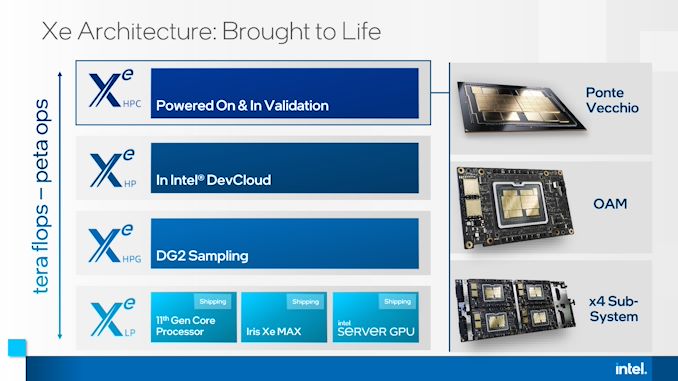

As part of this announcement today, Intel also stated that Ponte Vecchio will be widely available, in OAM and 4x dense form factors:

Sapphire Rapids will also be the first Intel processors to support Advanced Matrix Extensions (AMX), which we understand will help accelerate matrix-heavy workflows such as machine learning, alongside BFloat16 support. This will be paired with updates to Intel’s DL Boost software and OneAPI support. As Intel processors are still very popular for machine learning, especially training, Intel wants to capitalize on any future growth in this market with Sapphire Rapids. SPR will also be updated with Intel’s latest hardware-based security.

It is highly anticipated that Sapphire Rapids will also be Intel’s first multi compute-die Xeon where the silicon is designed to be integrated (we’re not counting Cascade Lake-AP Hybrids), and there are unconfirmed leaks to suggest this is the case, however nothing that Intel has yet verified.

The Aurora supercomputer is expected to be delivered by the end of 2021, and is anticipated to not only be the first official deployment of Sapphire Rapids, but also SPR-HBM. We expect a full launch of the platform sometime in the first half of 2022, with general availability soon after. The exact launch of SPR-HBM beyond HPC workloads is unknown, however given these time frames, Q4 2022 seems fairly reasonable depending on how aggressively Intel wants to attack the launch in light of any competition from other x86 vendors or Arm vendors. Even with SPR-HBM being offered to everyone, Intel may decide to prioritize key HPC customers over general availability.
