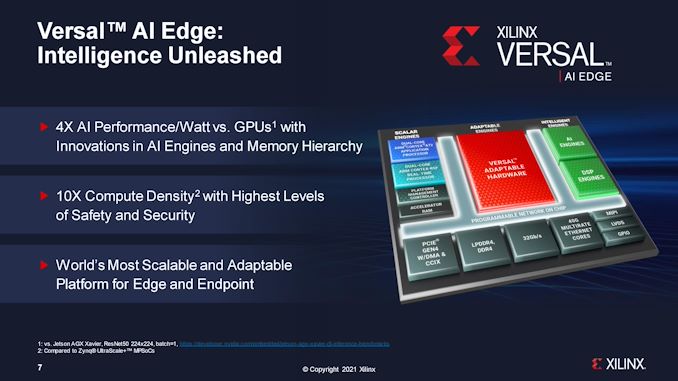

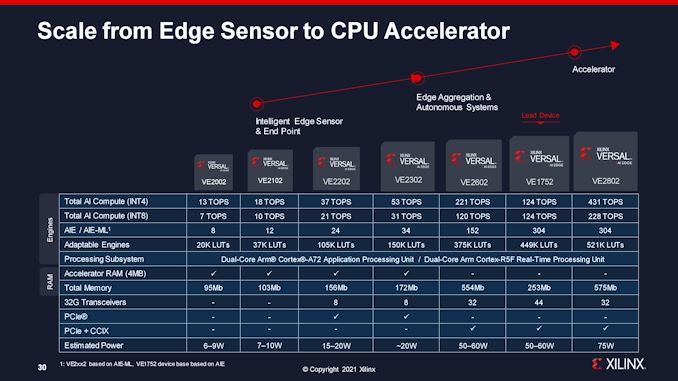

At the moment Xilinx is saying an growth to its Versal household, targeted particularly on low energy and edge gadgets. Xilinx Versal is the productization of a mix of many alternative processor applied sciences: programmable logic gates (FPGAs), Arm cores, quick reminiscence, AI engines, programmable DSPs, hardened reminiscence controllers, and IO – the advantages of all these applied sciences implies that Versal can scale from the excessive finish Premium (launched in 2020), and now all the way down to edge-class gadgets, all constructed on TSMC’s 7nm processes. Xilinx’s new Versal AI Edge processors begin at 6 W, all the best way as much as 75 W.

Going for the ACAP

A few years in the past, Xilinx noticed a change in its buyer necessities – regardless of being an FPGA vendor, clients wished one thing extra akin to a daily processor, however with the pliability with an FPGA. In 2018, the corporate launched the idea of an ACAP, an Adaptive Computing Acceleration Platform that supplied hardened compute, reminiscence, and IO like a standard processor, but in addition substantial programmable logic and acceleration engines from an FPGA. The primary high-end ACAP processors, constructed on TSMC N7, have been showcased in 2020 and featured giant premium silicon, some with HBM, for top efficiency workloads.

So fairly than having a design that was 100% FPGA, by transferring a few of that die space to hardened logic like processor cores or reminiscence, Xilinx’s ACAP design permits for a full vary of devoted standardized IP blocks at decrease energy and smaller die space, whereas nonetheless retaining a great portion of the silicon for FPGA permitting clients to deploy customized logic options. This has been vital within the development of AI, as algorithms are evolving, new frameworks are taking form, or totally different compute networks require totally different balances of sources. Having an FPGA on die, coupled with normal hardened IP, permits a single product set up to final for a few years as algorithms rebalance and get up to date.

Xilinx Versal AI Edge: Subsequent Technology

On that closing level about having an put in product for a decade and having to replace the algorithms, in no space is that extra true than with conventional ‘edge’ gadgets. On the ‘edge’, we’re speaking sensors, cameras, industrial programs, industrial programs – gear that has to final over its lengthy set up lifetime with no matter {hardware} it has in it. There are edge programs at present constructed on pre-2000 {hardware}, to provide you a scope of this market. In consequence, there's at all times a push to make edge gear extra malleable as wants and use instances change. That is what Xilinx is focusing on with its new Versal AI Edge portfolio – the flexibility to repeatedly replace ‘good’ performance in gear similar to cameras, robotics, automation, medical, and different markets.

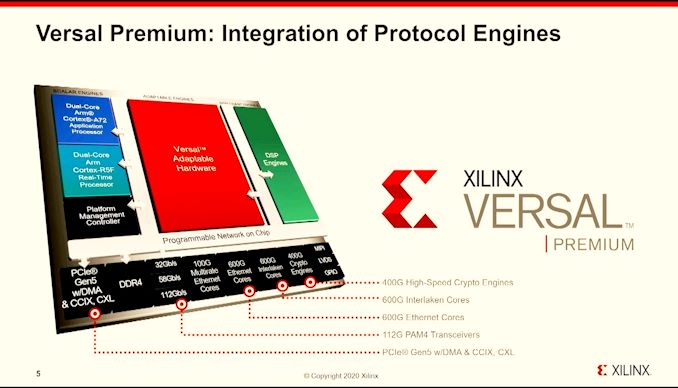

Xilinx’s conventional Versal system accommodates plenty of scalar engines (Arm A72 cores for functions, Arm R5 core for real-time), clever engines (AI blocks, DSPs), adaptable engines (FPGA), and IO (PCIe, DDR, Ethernet, MIPI). For the largest Versal merchandise, these are giant and highly effective, facilitated by a programmable community on chip. For Versal’s AI Edge platform, there are two new options into the combination.

First is using Accelerator SRAM positioned very near the scalar engines. Fairly than conventional caches, it is a devoted configurable scratchpad with dense SRAM that the engines can entry at low latency fairly than traversing throughout the reminiscence bus. Conventional caches use predictive algorithms to drag information from fundamental reminiscence, but when the programmer is aware of the workload, they will be sure that information wanted on the most latency crucial factors can already be positioned near the processor earlier than the predictors know what to do. This four MB block has a deterministic latency, enabling the real-time R5 to become involved as effectively, and provides 12.eight GB/s of bandwidth to the R5. It additionally has 35 GB/s bandwidth to the AI engines for information that should get processed in that path.

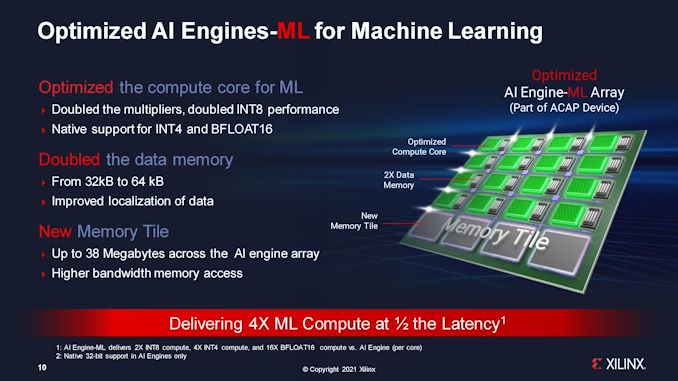

The opposite replace is within the AI Engines themselves. The unique Xilinx Versal {hardware} enabled each forms of machine studying: coaching and inference. These two workloads have totally different optimization factors for compute and reminiscence, and whereas it was vital on the massive chips to help each, these Edge processors will virtually completely be used for inference. In consequence, Xilinx has reconfigured the core, and is looking these new engines ‘AIE-ML’.

The only AIE-ML configuration, on the 6W processor, has eight AIE-ML engines, whereas the biggest has 304. What makes them totally different to the same old engines is by having double the native information cache per engine, further reminiscence tiles for international SRAM entry, and native help for inference particular information sorts, similar to INT4 and BF16. Past this, the multipliers are additionally doubled, enabling double INT8 efficiency.

The mix of those two options implies that Xilinx is claiming 4x efficiency per watt towards conventional GPU options (vs AGX Xavier), 10x the compute density (vs Zynq Ultrascale), and extra adaptability as AI workloads change. Coupled to this shall be further validation with help for a number of safety requirements in lots of the industrial verticals.

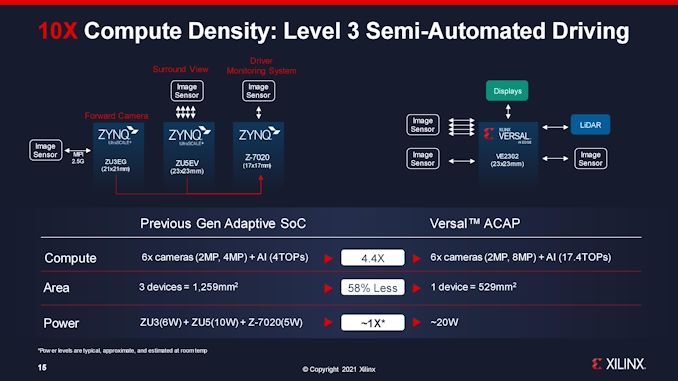

By way of our briefing with Xilinx, there was one explicit remark that stood out to me in gentle of the present international demand for semiconductors. All of it boils down to 1 slide, the place Xilinx in contrast its personal present automotive options for Stage three driving to its new answer.

On this state of affairs, to allow Stage three driving, the present answer makes use of three processors, totalling 1259 mm2 of silicon, after which past that reminiscence for every processor and such. The brand new Versal AI Edge answer replaces all three Zynq FPGAs, decreasing three processors all the way down to 1, happening to 529 mm2 of silicon for a similar energy, but in addition with 4x the compute capabilities. Even when an vehicle producer doubled up for redundancy, the brand new answer continues to be much less die space than the earlier one.

That is going to be a key characteristic of processor options as we go ahead – how a lot silicon is required to really get a platform to work. Much less silicon normally means much less price and fewer pressure on the semiconductor provide chain, enabling extra models to be processed in a set period of time. The trade-off is that giant silicon won't yield as effectively, or it won't be the optimum configuration of course of nodes for energy (and price in that regard), nonetheless if the business is ultimately restricted on silicon throughput and packaging, it's a consideration price making an allowance for.

Nevertheless, as is common within the land of FPGAs (or ACAPs), bulletins occur earlier and progress strikes a little bit slower. Xilinx’s announcement at present corresponds solely to the truth that documentation is offered at present, with pattern silicon accessible within the first half of 2022. A full testing and analysis equipment is coming within the second half of 2022. Xilinx is suggesting that clients within the AI Edge platform can begin prototyping at present with the Versal AI ACAP VCK190 Eval Equipment, and migrate.

Full specs of the AI Edge processors are within the slide under. The brand new accelerator SRAM is on the primary 4 processors, whereas AIE-ML is on all 2000-series components. Xilinx has indicated that every one AI Edge processors shall be constructed on TSMC's N7+ course of.

Associated Studying

Posting Komentar

Posting Komentar